With the many developments underway in the field of brain-computer interfaces, we’re really only scratching the surface of what’s possible. One of the bigger questions from the outset is whether to take an invasive or a noninvasive approach to deploying electrodes on or in the human subject. EEG headsets, for example, have the advantages of being noninvasive and convenient. But for more rigorous applications, they suffer significant limitations. Those limitations are now being addressed most visibly by the likes of Elon Musk, Mark Zuckerberg, and Bryan Johnson through their respective brain-computer interface initiatives.

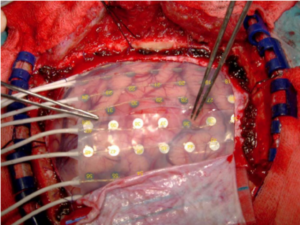

An electrocorticography (ECoG) grid, implanted in patients about to undergo epilepsy surgery, enables researchers to record and transmit electrical signals to and from the surface of the brain. Image courtesy of Mark Stone/University of Washington.

The Problem with EEG Headsets

Using an external EEG headset is like holding hands while wearing boxing gloves. To do better, we need to get the electrodes under that signal-attenuating skull, where they can make a more intimate connection with the brain. Ideally, you’d slide the electrodes in just under the dura, the outermost of the membrane layers that separate the skull from the brain. ECoG (electrocorticography) is one such technology: its electrodes sit in direct contact with the surface of the brain, enabling them to register electrical activity from the cerebral cortex with much greater sensitivity and resolution.

Mind Reading with Optical Imaging

If opening up your skull to slip in a set of ECoG electrodes does not appeal to you, consider Facebook’s mind-reading scheme. It sits outside the skull (like an EEG headset) but uses optical imaging to do the job. Facebook’s objective is to engineer a system that will allow people to telepathically text at 100 words per minute, considerably faster than we can type on a smartphone. The company also wants it to be inexpensive, wearable, and scalable.

Electrocorticography grid placement.

The technology, which is actually decades old, is called “fast optical scattering.” It involves beaming photons through the skull and into the brain, then measuring the reflected signal. Enough of the light penetrates the skull, bounces off neurons, and returns through the skull to reach an external sensor. That sensor is the kind of sophisticated technology that benefits from work funded by the US Department of Defense. What kinds of information can this system capture? It turns out that neural activity associated with words is distributed across the surface of the brain. Machine learning correlates patterns of the recorded neural activity with word meanings, and with its help the system can, in effect, read your mind. Facebook is bullish on the optical approach, claiming it is the only noninvasive technique capable of providing both the spatial and temporal resolution needed for practical use.
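
The article does not say which machine learning model Facebook used. As a rough illustration of "correlating patterns of recorded activity with words," here is a minimal nearest-centroid decoder in Python. The helper names (`fit_centroids`, `decode`), the word list, the 8-channel feature vectors, and the training trials are all hypothetical and synthetic. They stand in for whatever features an optical sensor would actually produce.

```python
import numpy as np

def fit_centroids(features, labels):
    """Hypothetical helper: average the feature vectors recorded for each word."""
    labels = np.asarray(labels)
    words = sorted(set(labels.tolist()))
    centroids = np.stack([features[labels == w].mean(axis=0) for w in words])
    return words, centroids

def decode(sample, words, centroids):
    """Hypothetical helper: return the word whose centroid is nearest to the sample."""
    distances = np.linalg.norm(centroids - sample, axis=1)  # Euclidean distance
    return words[int(np.argmin(distances))]

# Synthetic training data (not real recordings): 3 words, 8 channels, 20 noisy trials each.
rng = np.random.default_rng(0)
vocabulary = ["coffee", "hello", "yes"]
templates = {w: rng.normal(size=8) for w in vocabulary}
train_x, train_y = [], []
for word in vocabulary:
    for _ in range(20):
        train_x.append(templates[word] + rng.normal(scale=0.1, size=8))
        train_y.append(word)

words, centroids = fit_centroids(np.array(train_x), train_y)
new_trial = templates["coffee"] + rng.normal(scale=0.1, size=8)  # a fresh synthetic trial
print(decode(new_trial, words, centroids))
```

A nearest-centroid rule is about the simplest classifier that fits the description. A practical system would use far more sophisticated models, but the structure is the same: train on labeled recordings, then match new activity against what was learned.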

Dr. Mary Lou Jepsen, however, thinks the idea sucks. She worked on the technology at Facebook’s secretive Building 8 and served as executive director of engineering for the Oculus program. Before that, she was an executive at Google’s X “moonshot factory,” where she reported directly to Sergey Brin. She also has what she considers a better way to get thoughts out of one’s head, though it is no less of a moonshot. Her current endeavor is Openwater, a startup aiming to shrink a room-sized $5M MRI machine into a wearable product at a consumer electronics price point: an MRI in a ski cap. In other words, a device a thousand times lower in cost and a million times smaller in size. Is that possible?

Jepsen’s “thinking cap” is the equivalent of an MRI at a consumer price point.

“What I try to do,” Jepsen explains, “is make things that everybody knows are utterly, completely impossible; I try to make them possible.” Not surprisingly, Jepsen takes great pleasure in living on the “hairy edge of what the physics will do.”

She took her company’s name from a line in an essay that Peter Gabriel, inspired by Jepsen’s work, wrote for Edge. In it he observes, “The essence of who we are is contained in our thoughts and memories, which are about to be opened like tin cans and poured onto a sleeping world. Inexpensive scanners would enable all of us to display our own thoughts and access those of others who do the same.” fMRI studies suggest that this is possible. “We’re getting close,” Jepsen says. “These are no longer crackpot ideas—and we’re leveraging the tools of our time to do it. We just have to up the resolution by 100X.” To get there, Jepsen’s novel approach relies on remarkably simple technology: near-infrared liquid crystal displays and camera chips.

“We can focus infrared light,” she explains, “through the skull into the brain with neuron-size resolution—one micron—which means that for the first time, we can noninvasively see the activity of neurons. And we think we can capture the activity of a million neurons per second. I know we’ve got a hundred billion neurons, but there’s a $100 million prize if you can just get to one million per second.

“So what we’re doing with lowly LCDs and camera chips and infrared light, and of course good software and AI, is astonishing. So yes, we can replace MRI scanners—and actually get better resolution.”
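
To put Jepsen’s target in perspective, here is a back-of-the-envelope calculation using only the two figures she quotes. Even at one million neurons per second, sampling each of roughly a hundred billion neurons once would take more than a day:

```latex
\frac{10^{11}\ \text{neurons}}{10^{6}\ \text{neurons/s}} = 10^{5}\ \text{s} \approx 27.8\ \text{h}
```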

Jepsen is also sensitive to other shortcomings of current MRI technology. “People are dying because access to MRI is very expensive,” she explains. “MRI is not used as a first line of defense, for example, against breast cancer—even though it is the best tool for detecting it—because it is too expensive. People are getting diagnosed at stage three or stage four, which means three years or more of expensive pain and suffering. So if we can make MRI machines small, cheap, and available everywhere, we can catch diseases earlier.”

These healthcare applications, important as they are, are only intermediate products on the way to the really big prize Jepsen is after: telepathy. It turns out that the same technology that can help image cancer can also help image thoughts. “If I throw you into an MRI machine right now,” she says, “I can tell you what words you’re about to say. I can tell you what images are in your head. I can tell what music you’re thinking of. I can tell if you’re listening to me or not. That’s possible with an MRI, now.”

Researchers at Yale use an fMRI and a well-trained deep learning algorithm to visually reconstruct faces seen by test subjects.

But again, it’s not practical. fMRI is currently the best noninvasive method for measuring human brain activity, but it does not measure neural activity directly. Instead, it measures changes in blood flow, blood volume, and blood oxygenation caused by neural activity. “Neither MRI nor fMRI can look directly at the neurons,” Jepsen says, “but we believe we can.” All mental processes have a neurobiological basis, so it should be possible to “decode” the content of mental processes such as language, dreams, memory, and even visual imagery. And this could, of course, impact virtually every field.
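
The gap between neural activity and what fMRI records can be made concrete with the standard linear model of the BOLD (blood-oxygenation) signal. In that model, the measured response is approximately the neural activity convolved with a slow hemodynamic response function (HRF). The sketch below assumes the commonly used double-gamma HRF shape: gamma shapes of 6 for the peak and 16 for the undershoot, with an undershoot ratio of 1/6, as in SPM's canonical HRF. The single brief neural event is purely illustrative.

```python
import math
import numpy as np

def canonical_hrf(t, peak_shape=6.0, undershoot_shape=16.0, undershoot_ratio=1 / 6):
    """Double-gamma HRF (assumed SPM-style shape parameters, unit time scale, t >= 0 s)."""
    def gamma_pdf(x, k):
        return x ** (k - 1) * np.exp(-x) / math.gamma(k)
    return gamma_pdf(t, peak_shape) - undershoot_ratio * gamma_pdf(t, undershoot_shape)

dt = 0.1                          # time step in seconds
t = np.arange(300) * dt           # 30 s of simulated time
neural = np.zeros_like(t)
neural[round(2.0 / dt)] = 1.0 / dt  # illustrative brief burst of neural activity at t = 2 s

# Predicted BOLD signal: neural activity convolved with the HRF, truncated to the window.
bold = np.convolve(neural, canonical_hrf(t))[: len(t)] * dt

print(f"neural event at {t[np.argmax(neural)]:.1f} s, BOLD peak at {t[np.argmax(bold)]:.1f} s")
```

Under this model, a brief neural event produces a blood-flow response that peaks about five seconds later and is smeared over many seconds. That delay and blurring are why fMRI tracks neural activity only indirectly.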

“What if,” Jepsen speculates, “you could simply think of a new object, and the computers, the robots, and 3D printers could prototype it instantly? And then melt it down to enable iteration. What if we could dump images out of our heads? What if a movie director woke up one morning with an idea for a scene and dumped it to a computer so he could share it with the production team? Imagine how this could scale up to work on the big problems of the world, and to unleash creativity. Humanity has been through many automation transitions over the centuries, and we have always found new paths to blaze.”

Speaking of scaling up, Jepsen sees the noninvasive aspect of the technology she’s developing as paramount. “I’ve had non-optional brain surgery,” she says. “I don’t see that scaling. Therapeutic applications are one thing, but that is not a mass product.”

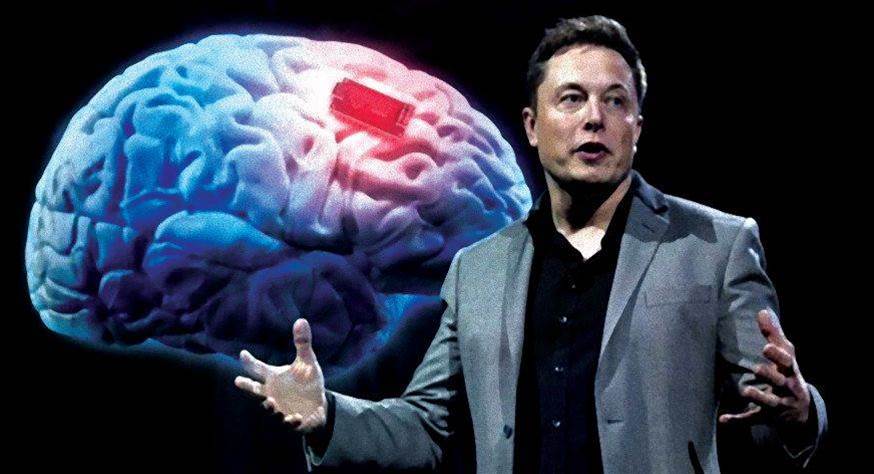

Neural Dust

All of this is in sharp contrast to the uber-invasive scheme that Elon Musk envisions with his company, Neuralink. His idea is to inject the brain with thousands of tiny silicon chips, called neural dust, that record and reflect neural information using ultrasound. As with the other players in this nascent field, the end goal is telepathy. But along the way, the technology can also be exploited as “electroceuticals” for myriad medical applications, because the chips can also stimulate nerves and muscles to treat disorders such as epilepsy. In its telepathic role, neural dust could not only speed up typing but also enable control of robots, prosthetics, home automation devices, and any number of other targets of the brain-machine interface. In fact, Musk believes that such technology will become de rigueur, if for no other reason than to help humans keep up with AI-enabled competitors.

As to how this would work, the basic architecture is not unlike what you already have in your smartphone. Have you ever used your mobile wallet to make a contactless payment? That’s made possible by RFID tuned for near-field communication (NFC). Your phone comes into range of a point-of-sale (POS) terminal equipped with an RFID reader, and a wireless protocol links the terminal and the RFID chip in your phone. Information is exchanged (the passive RFID chip “backscatters” its data to the reader), and the deal is done. Neural dust employs essentially the same operational concept. Think of the dust particles as the RFID chips, communicating with a reader implanted just beneath the skull. But instead of using radio frequency as the carrier wave, neural dust uses ultrasound. It turns out that radio waves don’t travel well through human tissue (and they tend to heat it up, which is not good), but ultrasound waves, as any expectant mother knows, do. The dust’s tiny size also calls for ultrasound, because that size is a better match for ultrasound’s short (millimeter-scale) wavelengths. Those short wavelengths also allow a much smaller reader, which matters when the whole scheme involves stuffing gear inside one’s head. (The reader must also sit beneath the skull, because bone strongly attenuates ultrasound. It can, however, be powered easily enough by an external transceiver via RF power transfer.)
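
The wavelength argument can be checked with λ = v / f. The sketch below assumes a typical speed of sound in soft tissue of about 1540 m/s. It compares the resulting ultrasound wavelengths with the free-space wavelength of a radio wave at the same frequency.

```python
SPEED_OF_SOUND_TISSUE = 1540.0  # m/s, typical soft-tissue value (assumption)
SPEED_OF_LIGHT = 299_792_458.0  # m/s, radio waves in free space

def wavelength_m(speed_m_per_s, frequency_hz):
    """Wavelength in metres from lambda = v / f."""
    return speed_m_per_s / frequency_hz

for f_mhz in (1, 2, 5, 10):
    f_hz = f_mhz * 1e6
    us_mm = wavelength_m(SPEED_OF_SOUND_TISSUE, f_hz) * 1e3  # ultrasound, in millimetres
    rf_m = wavelength_m(SPEED_OF_LIGHT, f_hz)                # radio, in metres
    print(f"{f_mhz:>2} MHz: ultrasound {us_mm:.3f} mm in tissue, radio {rf_m:.1f} m in free space")

# Frequency a radio wave would need for a 1 mm free-space wavelength:
print(f"radio needs about {SPEED_OF_LIGHT / 1e-3 / 1e9:.0f} GHz for a 1 mm wavelength")
```

At a few megahertz, ultrasound in tissue has a wavelength of well under two millimeters, comparable in scale to a tiny implant. A radio wave only reaches that scale at hundreds of gigahertz.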

But what, exactly, is all this neural dust communicating? Well, here’s where things get really clever. Each bit of dust, also called a mote, can measure the electrical activity in nearby neurons. When ultrasound waves emitted by the reader impinge on the mote, its piezoelectric crystal begins to vibrate in sympathy. The crystal converts that vibration into a voltage that powers the mote, which also includes a tiny transistor pressed against a neuron. As the electrical activity in the neuron varies (as it does with thought), it changes the amount of current flowing through the transistor. That in turn alters the vibration of the crystal and, consequently, the intensity of the ultrasonic energy it reflects (or backscatters) to the reader, which is constantly listening for those returned signals. These changes in the reflected signal are what encode the neuron’s activity. Finally, a deep learning algorithm takes in all this streaming neural data and makes sense of it, ultimately driving the brain-interfaced device of choice. Crazy enough?
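
The encoding step described above amounts to amplitude modulation. The neural signal changes how strongly the mote’s echo comes back, and the reader recovers the signal from the echo’s envelope. The Python sketch below simulates this under simplifying assumptions:

- a hypothetical 2 MHz carrier;
- a linear relationship between neural signal and echo amplitude, with invented `BASELINE` and `GAIN` values;
- a noiseless channel;
- one neural reading held constant per 25 µs reader window.

The reader uses coherent demodulation: it mixes the echo with the carrier and then averages.

```python
import numpy as np

CARRIER_HZ = 2.0e6   # hypothetical ultrasound carrier frequency
FS = 40.0e6          # simulation sample rate: 20 samples per carrier cycle
BASELINE = 1.0       # hypothetical echo amplitude with no neural activity
GAIN = 0.05          # hypothetical echo change per unit of neural signal

def mote_echo(neural, t):
    """Echo from the mote: the carrier amplitude follows the neural signal (assumed linear)."""
    amplitude = BASELINE + GAIN * neural
    return amplitude * np.cos(2 * np.pi * CARRIER_HZ * t)

def reader_demodulate(echo, t, samples_per_window):
    """Coherent AM demodulation: mix with the carrier, then average each window."""
    # 2*A*cos^2(wt) = A + A*cos(2wt); averaging over whole cycles removes the 2w term.
    mixed = 2 * echo * np.cos(2 * np.pi * CARRIER_HZ * t)
    n_windows = len(mixed) // samples_per_window
    envelope = mixed[: n_windows * samples_per_window].reshape(n_windows, -1).mean(axis=1)
    return (envelope - BASELINE) / GAIN  # undo the assumed linear encoding

samples_per_window = 1000                          # 25 us per reading at 40 MHz
neural_in = np.array([0.0, 0.2, 1.5, -0.4, 0.0])   # illustrative signal values (arbitrary units)
t = np.arange(len(neural_in) * samples_per_window) / FS
echo = mote_echo(np.repeat(neural_in, samples_per_window), t)
neural_out = reader_demodulate(echo, t, samples_per_window)
print(np.round(neural_out, 6))
```

A real link would also have to cope with noise, reflections from surrounding tissue, and many motes sharing the channel. The sketch only shows how a change in echo strength can carry the neuron’s signal back to the reader.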

Closing the Loop

Now, reading thoughts is one thing, but how do we close the loop by feeding thoughts back from a third party in a way that a human can understand? Think “cochlear implant.” After all, one invasive technology deserves another!

In this case, the output of the AI process is converted to sound and sent to the cochlear implant, which bypasses the ear’s normal hearing process. Through an API, that audio reaches a sound processor worn behind the ear, which transmits signals to the implant. The implant in turn stimulates the cochlear nerve, causing it to send signals to the brain and creating the experience of sound. The result is a complete input/output BCI system. What’s more, the AI could throw real-time language translation into the bargain and, of course, do your typing. Will it also wash your windows?
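
Here is a slightly more concrete picture of the output side. Multichannel cochlear implants generally split incoming sound into frequency bands and drive each electrode according to the energy in its band. Because the cochlea is tonotopic, low-frequency bands map toward its apex and high-frequency bands toward its base. The Python sketch below computes per-band levels from audio with a simple FFT filterbank. The channel count, band edges, and frame length are illustrative assumptions. Real sound processors add steps omitted here, such as loudness compression, pulse timing, and per-patient fitting.

```python
import numpy as np

def electrode_levels(audio, fs, n_channels=8, frame_len=256, f_lo=200.0, f_hi=7000.0):
    """Return (levels, edges): one level per frame and per band, lowest band first.

    Each band stands in for one hypothetical electrode: band 0 would sit nearest
    the apex of the cochlea, the last band nearest the base.
    """
    edges = np.geomspace(f_lo, f_hi, n_channels + 1)   # log-spaced band edges in Hz
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / fs)     # FFT bin centre frequencies
    window = np.hanning(frame_len)
    n_frames = len(audio) // frame_len
    levels = np.zeros((n_frames, n_channels))
    for i in range(n_frames):
        frame = audio[i * frame_len:(i + 1) * frame_len] * window
        magnitude = np.abs(np.fft.rfft(frame))
        for ch in range(n_channels):
            in_band = (freqs >= edges[ch]) & (freqs < edges[ch + 1])
            if in_band.any():
                levels[i, ch] = np.sqrt(np.mean(magnitude[in_band] ** 2))  # band RMS
    return levels, edges

fs = 16_000
t = np.arange(fs) / fs                       # one second of audio
tone = np.sin(2 * np.pi * 1000.0 * t)        # a 1 kHz test tone
levels, edges = electrode_levels(tone, fs)
loudest = np.argmax(levels, axis=1)          # most strongly driven band in each frame
print(f"1 kHz drives band {loudest[0]} ({edges[loudest[0]]:.0f}-{edges[loudest[0] + 1]:.0f} Hz)")
```

In an implant, levels like these would roughly set the strength of the current pulses delivered on each electrode. In the scenario described here, the AI’s audio output would simply be the input signal.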

This article is based on an outtake from the book, Moonshots, by Naveen Jain, with John Schroeter.
