The idea of controlling robotic operations with nothing more than brainwaves is the ultimate expression of the man-machine interface. Using machine learning to detect actionable brainwave signatures, and to translate them into commands a robot or other actuator can act on, opens up new possibilities for everything from the factory floor to the kitchen to the self-driving car. A simple experiment zeroes in on one such brain signal, the error-related potential, and demonstrates its use in a basic robotic task.

***

Researchers at Boston University’s Guenther Lab and MIT’s Distributed Robotics Lab teamed up to investigate how brain signals might be used to control robots, with the aim of making human-robot interaction more seamless. While capturing brain signals is difficult, one signal in particular has a strong signature and is thus a bit more accessible to headset-based (non-invasive) electrode detection.

The error-related potential (ErrP) signal is generated reflexively by the brain when it perceives a mistake, including mistakes made by someone else. What if this signal could be exploited as a human-robot control mechanism? If so, then humans would be able to supervise robots and immediately communicate, for example, a “stop” command when the robot commits an error. No need to type a command or push a button. In fact, no overtly conscious action would be required at all: the brain’s automatic, naturally occurring ErrP does all the work. The advantages of such an approach include minimizing user training and easing cognitive load.

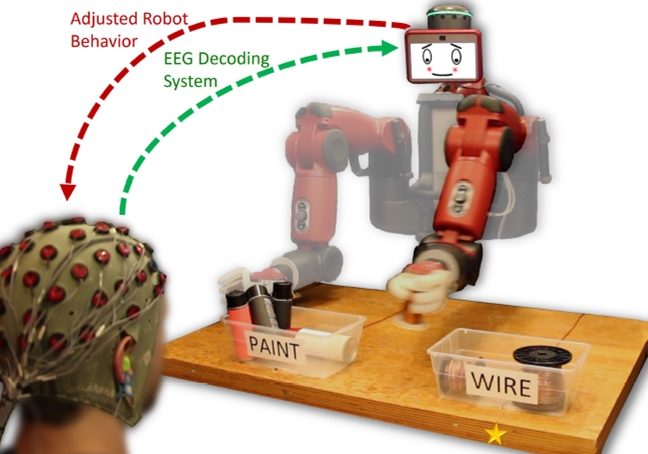

To these ends, the team’s work explored the application of EEG-measured error-related potentials to real-time closed-loop robotic tasks, using a feedback system enabled by the detection of these signals. The setup involved a Rethink Robotics Baxter robot that performs a simple object-sorting task under human supervision. The human operator’s EEG signals are captured and decoded in real time. When an ErrP signal is detected, the robot’s action is automatically corrected. The error-related potential thus acts as a real-time feedback signal from the human to the robot.

Figure 1. The robot is informed that its initial motion was incorrect based upon real-time decoding of the observer’s EEG signals, and it corrects its selection accordingly to properly sort an object, in this case, a can of paint or a spool of wire.

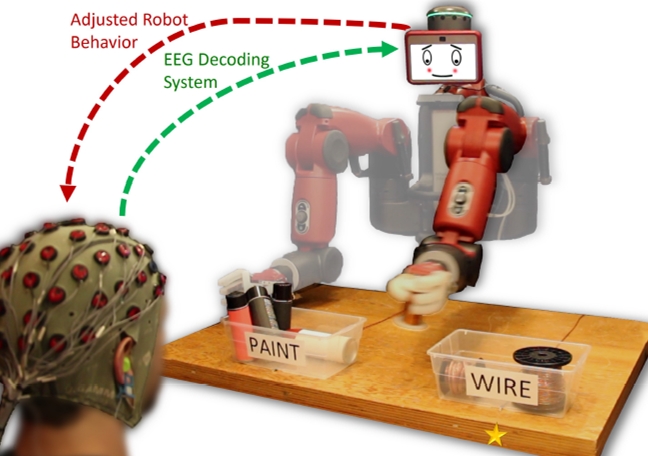

Another attractive aspect of the ErrP is how easily it generalizes to any application it could control, again without requiring extensive training or active thought modulation by the human. This is because ErrPs are generated when a human consciously or unconsciously recognizes that an error, any error, has been committed. It turns out that these signals may actually be integral to the brain’s trial-and-error learning process. What’s more, these ErrPs exhibit a characteristic profile, even across users with no prior training, and they can be detected within 500 ms of the error being perceived.

Figure 2. Error-Related Potentials exhibit a characteristic shape across subjects and include a short negative peak, a short positive peak, and a longer negative tail.

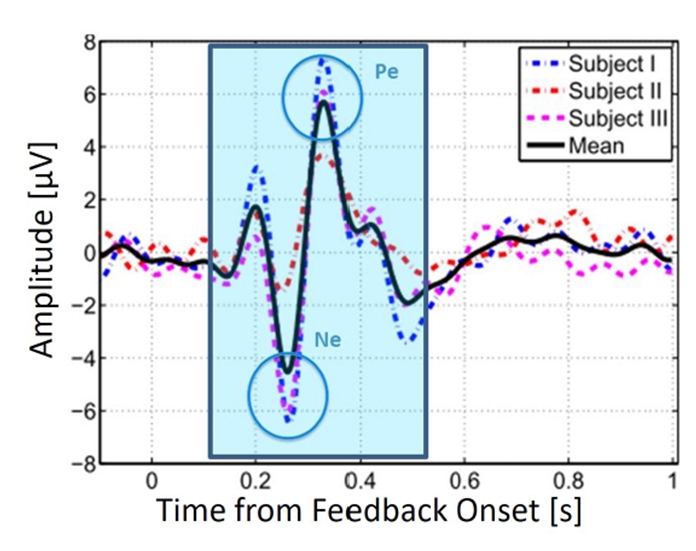

So how does it work? In short, Baxter randomly selects a target and performs a two-stage reaching movement. The first stage is a lateral movement that conveys Baxter’s intended target and releases a pushbutton switch to initiate the EEG classification system. The human mentally judges whether this choice is correct. If so, the system tells Baxter to continue toward the intended target. If, on the other hand, an ErrP is detected, Baxter is instructed to switch to the other target. The second stage of the reaching motion is then a forward reach towards the object. If a misclassification occurs, a secondary ErrP may be generated, since the robot did not obey the human supervisor’s feedback.
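The decision logic of a single trial can be sketched in a few lines of Python. This is only an illustration of the two-stage paradigm described above, not the team's controller code; the target labels and the function names `final_target` and `run_trial` are hypothetical.

```python
# Illustrative sketch of one binary-choice trial (names are hypothetical).
TARGETS = ("left", "right")  # assumed labels for the two objects


def final_target(intended, errp_detected):
    """Return the target of the second-stage (forward) reach."""
    if errp_detected:
        # An ErrP was decoded, so switch to the other target.
        return TARGETS[1 - TARGETS.index(intended)]
    return intended


def run_trial(correct, intended, errp_detected):
    """Summarize a trial given the decoder's single-bit output."""
    initial_choice_wrong = intended != correct
    final = final_target(intended, errp_detected)
    return {
        "intended": intended,
        "final": final,
        "reached_correct": final == correct,
        # The decoder misclassified if its output disagrees with whether
        # the initial choice was actually wrong; this is the situation in
        # which a secondary ErrP may be generated.
        "misclassified": errp_detected != initial_choice_wrong,
    }
```

For example, `run_trial("left", "left", errp_detected=True)` describes a false alarm: Baxter's initial choice was right, but the decoder reported an error, so the final reach goes to the wrong object.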

Figure 3. The experiment implements a binary reaching task; a human operator mentally judges whether the robot chooses the correct target, and online EEG classification is performed to immediately correct the robot if a mistake is made. The system includes a main experiment controller, the Baxter robot, and an EEG acquisition and classification system. An Arduino relays messages between the controller and the EEG system. A mechanical contact switch detects arm motion initiation.

Robot Control and EEG Acquisition

The control and classification system for the experiment is divided into four major subsystems, which interact with each other as shown in Figure 3. The experiment controller, written in Python, oversees the experiment and implements the chosen paradigm. For example, it decides the correct target for each trial, tells Baxter where to reach, and coordinates all event timing.

The Baxter robot communicates directly with the experiment controller via the Robot Operating System (ROS). The controller provides joint angle trajectories for Baxter’s 7-degree-of-freedom left arm in order to indicate an object choice to the human observer and to complete a reaching motion once EEG classification finishes. The controller also projects images onto Baxter’s screen, normally showing a neutral face but switching to an embarrassed face upon detection of an ErrP.

The EEG system acquires real-time EEG signals from the human operator via 48 passive electrodes, located according to the extended 10-20 international system and sampled at 256 Hz using the g.USBamp EEG system. A dedicated computer uses Matlab and Simulink to capture, process, and classify these signals. The system outputs a single bit on each trial that indicates whether a primary error-related potential was detected after the initial movement made by Baxter’s arm.
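To give a feel for the data involved, the sketch below converts a window duration into a sample count at the stated 256 Hz and slices that window out of a channels-by-samples recording. The 256 Hz rate and 48 channels come from the setup above; the window duration passed in, the list-of-lists layout, and the function names are assumptions made for illustration.

```python
SAMPLE_RATE_HZ = 256  # sampling rate stated in the text
NUM_CHANNELS = 48     # electrode count stated in the text


def samples_in_window(duration_ms, sample_rate_hz=SAMPLE_RATE_HZ):
    """Number of samples per channel in a window of the given duration."""
    return round(duration_ms * sample_rate_hz / 1000)


def extract_window(recording, onset_sample, duration_ms):
    """Slice every channel from onset_sample for duration_ms.

    `recording` is assumed to be a list of per-channel sample lists.
    """
    n = samples_in_window(duration_ms)
    end = onset_sample + n
    if onset_sample < 0 or any(end > len(channel) for channel in recording):
        raise ValueError("window extends beyond the recorded data")
    return [channel[onset_sample:end] for channel in recording]
```

At 256 Hz, a hypothetical 500 ms window holds 128 samples per channel, or 48 × 128 = 6,144 values across all electrodes.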

The EEG/Robot interface uses an Arduino Uno that controls the indicator LEDs and forwards messages from the experiment controller to the EEG system. It sends status codes to the EEG system using seven pins of an 8-bit parallel port connected to extra channels of the acquisition amplifier. A pushbutton switch is connected directly to the 8th bit of the port to inform the EEG system of robot motion. The EEG system uses a single 9th bit to send ErrP detections to the Arduino. The Arduino communicates with the experiment controller via USB serial.

Experiment codes that describe events such as stimulus onset and robot motion are sent from the experiment controller to the Arduino, which forwards these 7-bit codes to the EEG system by setting the appropriate bits of the parallel port. All bits of the port are set simultaneously using low-level Arduino port manipulation to avoid synchronization issues while the pins are being set and read. Codes are held on the port for 50 ms before the lines are reset. The EEG system thus learns about the experiment status and timing via these codes, and uses this information for classification. In turn, it outputs a single bit to the Arduino to indicate whether an error-related potential is detected. This bit triggers an interrupt on the Arduino, which then informs the experiment controller so that Baxter’s trajectory can be corrected. The experiment controller listens for this signal throughout the entirety of Baxter’s reaching motion.
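The code-passing scheme can be modeled in Python as follows. The real system does this in Arduino firmware with direct port writes; here `write_port` is a hypothetical callable standing in for that hardware write, and the assumption that the seven code bits occupy the low-order bits (with the switch on the highest bit) is ours, not something the article specifies.

```python
import time

CODE_MASK = 0x7F      # seven status-code bits (assumed to be the low bits)
SWITCH_BIT = 0x80     # eighth bit, wired to the pushbutton switch
HOLD_SECONDS = 0.050  # codes are held for 50 ms, per the text


def encode_status(code):
    """Validate that a status code fits in the seven code bits."""
    if not 0 <= code <= CODE_MASK:
        raise ValueError("status code must fit in 7 bits (0-127)")
    return code


def decode_port(value):
    """Split an 8-bit port reading into (status_code, switch_bit_set)."""
    return value & CODE_MASK, bool(value & SWITCH_BIT)


def send_code(write_port, code, hold_seconds=HOLD_SECONDS, sleep=time.sleep):
    """Write all code bits in one operation, hold them, then reset the lines."""
    write_port(encode_status(code))
    sleep(hold_seconds)
    write_port(0)
```

Writing the whole value in one call mirrors the point made above: setting the pins one at a time would let the EEG system sample a half-written code.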

Training the Neural Network to Detect ErrP Signals

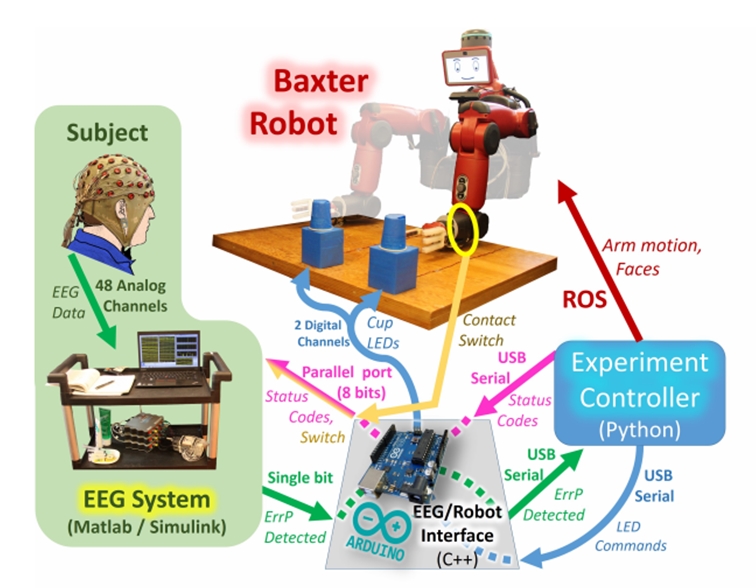

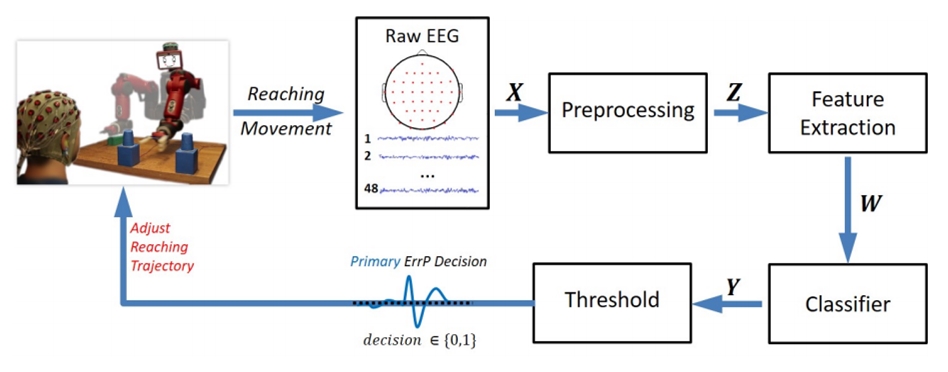

The classification pipeline and feedback control loop are illustrated in Figure 4. The robot triggers the pipeline by moving its arm to indicate object selection; this moment is the feedback onset time. A window of EEG data is then collected and passed through various pre-processing and classification stages. The result is a determination of whether an ErrP signal is present, and thus whether Baxter committed an error. The implemented online system uses this pipeline to detect a primary error in response to Baxter’s initial movement; offline analysis also indicates that a similar pipeline can be applied to secondary errors to boost performance. This optimized pipeline achieves online decoding of ErrP signals and thereby enables closed-loop robotic control. A single block of 50 closed-loop trials is used to train the classifier, after which the subject immediately begins controlling Baxter without prior EEG experience.

The classification pipeline used to analyze the data has a pre-processing step where the raw signals are filtered and features are extracted. This is followed by a classification step where a learned classifier is applied to the processed EEG signals, yielding linear regression values. These regression values are subjected to a threshold, which was learned offline from the training data. The resulting binary decision is used to control the final reach of the Baxter robot.
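The final two steps, a linear regression score followed by a threshold, can be sketched as below. The feature extraction is omitted, and the article does not say how the threshold was chosen; picking the cut that maximizes training accuracy is an assumption used here purely for illustration, as are all function names.

```python
def regression_score(features, weights, bias=0.0):
    """Linear regression output for one processed EEG feature vector."""
    if len(features) != len(weights):
        raise ValueError("features and weights must have the same length")
    return sum(f * w for f, w in zip(features, weights)) + bias


def detect_errp(features, weights, threshold, bias=0.0):
    """Binary decision: True means an ErrP is considered present."""
    return regression_score(features, weights, bias) > threshold


def choose_threshold(scores, labels):
    """Pick a threshold offline from training scores and True/False labels.

    Assumed criterion: the candidate cut with the highest training accuracy.
    """
    if not scores or len(scores) != len(labels):
        raise ValueError("need equally many scores and labels, at least one")
    distinct = sorted(set(scores))
    # Candidate cuts: one below every score, plus midpoints between
    # consecutive distinct scores.
    cuts = [distinct[0] - 1.0]
    cuts += [(a + b) / 2 for a, b in zip(distinct, distinct[1:])]

    def accuracy(cut):
        hits = sum((s > cut) == bool(y) for s, y in zip(scores, labels))
        return hits / len(scores)

    return max(cuts, key=accuracy)
```

In the experiment, the resulting single bit is what the EEG system sends back to the Arduino to correct Baxter's reach.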

Figure 4. Various pre-processing and classification stages identify ErrPs in a buffer of EEG data. This decision immediately affects robot behavior, which affects EEG signals and closes the feedback loop.

Want to see it in action? Check out the video.

To learn more, see the full-text article by Andres F. Salazar-Gomez, Joseph DelPreto, Stephanie Gil, Frank H. Guenther, and Daniela Rus.