Virtual and augmented reality (VR/AR) are the next great frontiers in computing. Like the Internet revolution of the 1990s and the mobile revolution of the 2000s, they represent a radical shift in how we live, work, play, learn, and connect. The next major step in this evolution is the second generation of mobile VR, which is just around the corner. But one major challenge remains – how we interact with it.

It’s hard to imagine the Internet revolution without the mouse and keyboard, or the mobile revolution without the touchscreen. Input is an existential question inherent in each new computing platform, and answering that question means the difference between mainstream adoption and niche curiosity. At Leap Motion, we’ve developed an intuitive interaction technology designed to address this challenge – the fundamental input for mobile VR.

The VR Landscape in 2017 (and Beyond)

View of the HTC Vive headset. Image courtesy of HTC.

We live in the first generation of virtual reality, which consists of two major families: PC and mobile. PC headsets like the Oculus Rift and HTC Vive, running on powerful gaming computers and packed with high-quality displays, enable premium VR experiences. External sensors placed around a dedicated space track your head movements and handheld controllers as you walk through virtual worlds. This gives you six degrees of freedom – orientation (pitch, yaw, roll) and position (X, Y, Z).

Mobile headsets like the Gear VR and Google Daydream are not tethered to a computer. Most use slotted-in mobile phones to handle processing demand, so their capabilities are limited compared to their PC cousins. For instance, they have orientation tracking, but not positional tracking. You can look around in all directions, but you can’t move your head freely within the space.
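To make the difference between the two families concrete, here is a minimal Python sketch of a head pose. The class and field names are illustrative assumptions and do not come from any headset SDK. A first-generation mobile headset would fill in only the orientation half, so leaning forward changes nothing the application can see.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class HeadPose:
    """Hypothetical head pose record (names are illustrative only)."""

    # Orientation in degrees: reported by both PC and mobile headsets.
    pitch: float
    yaw: float
    roll: float
    # Position in meters (X, Y, Z): None when there is no positional tracking.
    position: Optional[Tuple[float, float, float]] = None

    def degrees_of_freedom(self) -> int:
        # Three rotational axes, plus three translational axes if tracked.
        return 6 if self.position is not None else 3


pc_pose = HeadPose(pitch=5.0, yaw=90.0, roll=0.0, position=(0.2, 1.6, -0.5))
mobile_pose = HeadPose(pitch=5.0, yaw=90.0, roll=0.0)

print(pc_pose.degrees_of_freedom())      # 6
print(mobile_pose.degrees_of_freedom())  # 3
```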

Google Daydream mobile VR headset. Image courtesy of Google.

Despite these limitations, mobile VR has several major advantages over PC VR. Mobile VR is more accessible, less expensive, and can be taken anywhere. The barriers to entry are lower. The user community is already an order of magnitude larger than that of PC VR – millions, rather than hundreds of thousands.

Finally, and most importantly, product cycles in the mobile industry are much faster than in the PC space. Companies are willing to move faster and take more risks, partly because people are willing to replace mobile devices more often than their computers. This means that second-generation mobile VR will explode many of the first generation’s limitations – with new graphics capabilities, positional tracking, and more.

This brings us back to the critical challenge of 2017. The VR industry is at a turning point where it needs to define how people will interact with virtual and augmented worlds. The earliest devices featured buttons on the headsets and used gaze direction. More recently, we’ve seen little remotes capable of orientation tracking, though these aren’t tracked or represented in 3D virtual space.

It’s hard to make these inputs feel compelling by themselves. Handheld controllers are acceptable for PC-tethered experiences because people “jack in” from the comfort of dedicated VR spaces. They’re also great optional accessories for certain game genres. But what about airports, buses, and trains? What happens when augmented reality arrives in our homes, offices, and streets?

We Need to Free Our Hands

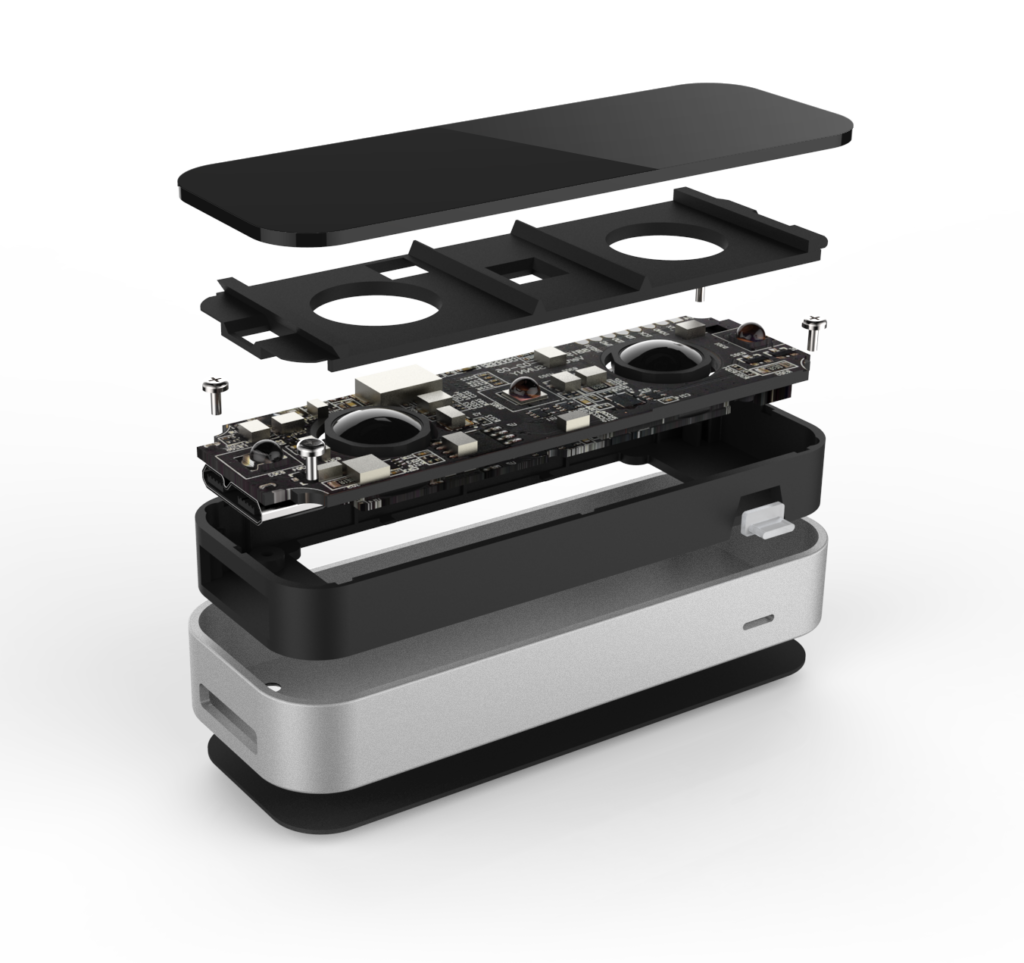

An exploded view of the Leap Motion Controller.

Leap Motion has been working to remove the barriers between people and technology for seven years. In 2013, we released the Leap Motion Controller, which tracks the movement of your hands and fingers. Our technology features high accuracy, extremely low latency, and low processing power requirements.

At the dawn of the VR revolution in 2014, we discovered that these three elements were also crucial for total hand presence in VR. We adapted our existing technology and focused on the unique problems of head-mounted tracking. In February 2016, we released our third-generation hand tracking software specifically made for VR/AR, nicknamed “Orion.”

Orion proved to be a revolution in interface technology for the space. Progress that wasn’t expected for 10 years was suddenly available overnight across an ecosystem of hundreds of thousands of developer devices. All on a sensor designed for desktop computing.

In December 2016, we announced the Leap Motion Mobile Platform – a combination of software and hardware we’ve made specifically for untethered, battery-powered VR/AR devices.

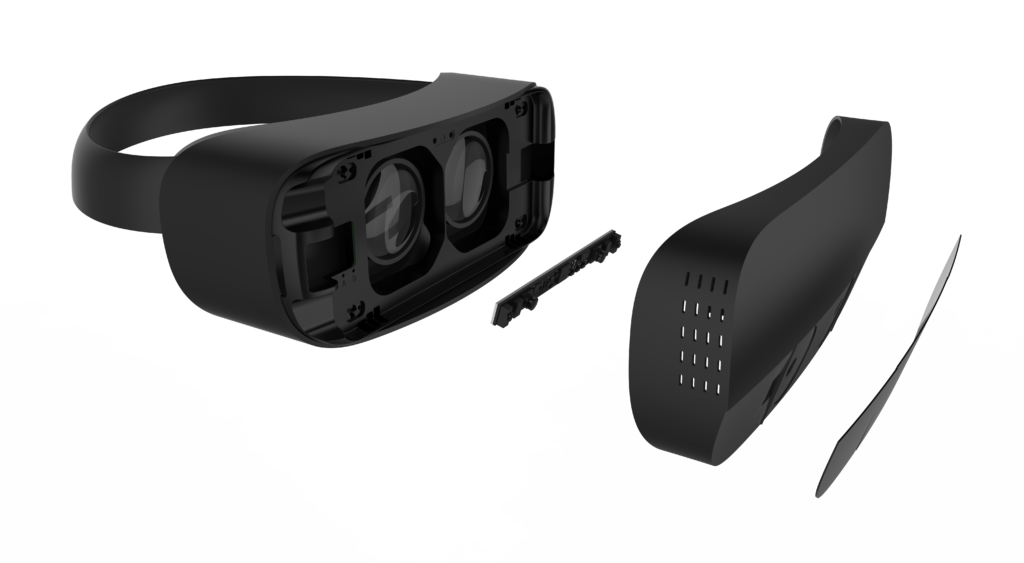

An exploded view of a VR headset with the Leap Motion Mobile VR Platform (second from left) as a core component.

The challenges of building a tracking platform for mobile VR have been immense. We needed a whole new sensor with higher performance and lower power. We needed to make the most sophisticated hand tracking software in the world run at nearly 10 times the speed. And we needed it to be capable of seeing wider and farther than ever, with a field of view (FOV) of 180×180 degrees.

With all that in mind, here’s a quick look at how the technology brings your hands into new worlds.

Hardware

Leap Motion has always taken a hybrid approach with hardware and software. From a hardware perspective, we have built up a premier computer vision platform by optimizing simple hardware components. The heart of the sensor consists of two cameras and two infrared (IR) LEDs. These cameras are placed 64 mm apart to replicate the average human interpupillary distance. In order to create the largest tracking volume possible, we use wide-angle fisheye lenses that see a full 180×180° field of view. Because the module will be embedded in a wide range of virtual and augmented reality products, it was important to make it small. Despite the extremely wide field of view, we were able to keep the module under 8 mm in height.

The Leap Motion Mobile VR Platform hardware.

The module is embedded into the front of the headset, and captures images at over 100 frames per second when tracking hands. The LEDs act as a camera flash, pulsing only as the sensor is creating a short exposure. The device then streams the images to the host processor with low latency to be processed by the Leap Motion software.
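As a rough back-of-the-envelope illustration of why pulsing the LEDs only during exposure matters on a battery-powered device: at 100 frames per second, each frame lasts 10 ms, so a short exposure keeps the LEDs off for most of that time. The exposure value in this sketch is a hypothetical number chosen for illustration, not a published specification, and the function names are our own.

```python
def frame_period_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def led_duty_cycle(fps: float, exposure_ms: float) -> float:
    """Fraction of each frame during which the LEDs are lit."""
    period = frame_period_ms(fps)
    if exposure_ms > period:
        raise ValueError("exposure cannot be longer than the frame period")
    return exposure_ms / period


FPS = 100.0                # lower bound quoted above ("over 100 frames per second")
ASSUMED_EXPOSURE_MS = 0.5  # hypothetical value, for illustration only

period_ms = frame_period_ms(FPS)
duty = led_duty_cycle(FPS, ASSUMED_EXPOSURE_MS)

print(f"frame period: {period_ms:.1f} ms")
print(f"LED duty cycle: {duty:.1%}")
```

Under that assumed exposure, the LEDs would be lit for about 5% of each frame rather than continuously.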

Software

Once the image data is streamed to the processor, it’s time for some heavy mathematical lifting. Despite popular misconceptions, our technology doesn’t generate a depth map. Depth maps are extraordinarily compute-intensive, which makes them difficult to process with minimal latency across a wide range of devices, and they are information-poor. Instead, the Leap Motion tracking software applies advanced algorithms to the raw sensor data to track the user’s hands.

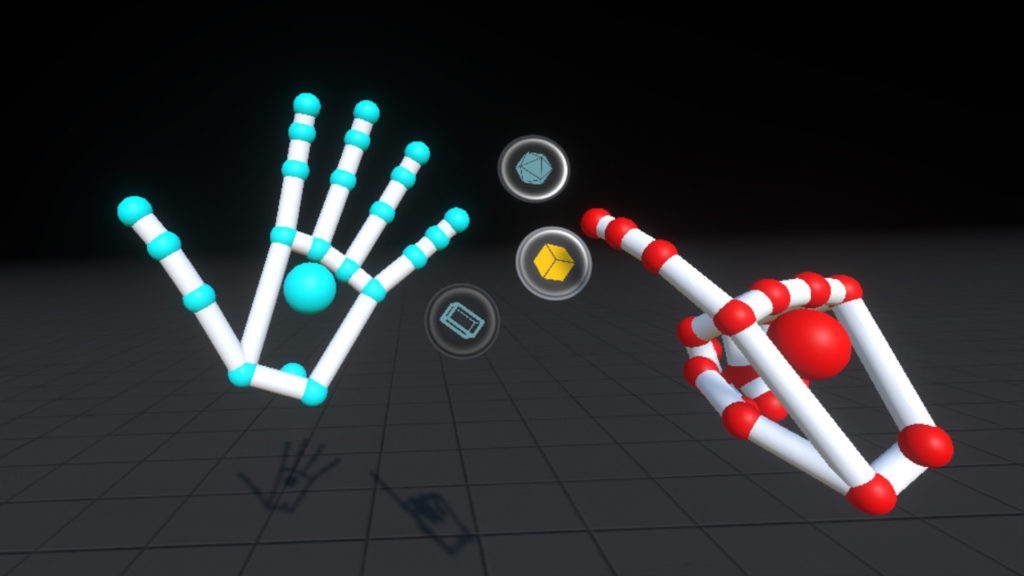

In under three milliseconds, the software processes the image, isolates the hands, and extracts the 3D coordinates of 26 joints per hand with great precision. Because Leap Motion brings the full fidelity of a user’s hands into the digital world, the software must track not only visible finger positions, but also fingers that are occluded from the sensor. To do that, the algorithms must have a deep understanding of what constitutes a hand, so that not seeing a finger can be just as important to locating its position as seeing it fully.

After the software has extracted the position and orientation for hands, fingers, and arms, the results – expressed as a series of frames, or snapshots, containing all of the tracking data – are sent through the API.
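A frame of this kind can be pictured as a small nested record. The Python sketch below is only an illustration of that shape: the class names, field names, and timestamp unit are assumptions made for this example and are not the actual Leap Motion API. The one figure taken from the text is the 26 joints per hand.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
JOINTS_PER_HAND = 26  # figure quoted in the text


@dataclass
class Hand:
    """Hypothetical per-hand tracking result."""

    is_left: bool
    joints: List[Vec3]  # one 3D coordinate per tracked joint

    def __post_init__(self) -> None:
        if len(self.joints) != JOINTS_PER_HAND:
            raise ValueError(f"expected {JOINTS_PER_HAND} joints")


@dataclass
class Frame:
    """Hypothetical snapshot of all tracking data at one instant."""

    frame_id: int
    timestamp_us: int  # assumed unit: microseconds
    hands: List[Hand] = field(default_factory=list)


def joint_count(frame: Frame) -> int:
    """Total number of joint coordinates carried by a frame."""
    return sum(len(hand.joints) for hand in frame.hands)


origin: Vec3 = (0.0, 0.0, 0.0)
frame = Frame(
    frame_id=1,
    timestamp_us=10_000,
    hands=[
        Hand(is_left=True, joints=[origin] * JOINTS_PER_HAND),
        Hand(is_left=False, joints=[origin] * JOINTS_PER_HAND),
    ],
)

print(joint_count(frame))  # 52
```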

From there the application logic ties into the Leap Motion input. With it, you can reach into VR/AR to interact directly with virtual objects and interfaces. To make this feel as natural as possible, we’ve also developed the Leap Motion Interaction Engine – a set of parallel physics designed specifically for the unique challenge of real hands in virtual worlds.
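As a simple illustration of application logic built on tracked joint positions, the Python sketch below flags a pinch when the thumb tip and index fingertip come close together. This is not how the Interaction Engine works; the function name, the inputs, and the 2 cm threshold are all assumptions chosen for the example.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical threshold in meters; a real application would tune this.
PINCH_THRESHOLD_M = 0.02


def is_pinching(thumb_tip: Vec3, index_tip: Vec3,
                threshold_m: float = PINCH_THRESHOLD_M) -> bool:
    """Return True when the two fingertips are closer than the threshold."""
    return math.dist(thumb_tip, index_tip) < threshold_m


# Fingertips 1 cm apart: treated as a pinch.
print(is_pinching((0.0, 0.0, 0.0), (0.01, 0.0, 0.0)))  # True
# Fingertips 8 cm apart: open hand.
print(is_pinching((0.0, 0.0, 0.0), (0.08, 0.0, 0.0)))  # False
```

A distance check like this is deliberately naive. It ignores tracking noise and gives no hysteresis between grab and release, which is part of why a dedicated interaction layer is useful.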

A floating hand menu from the PC version of Blocks.

Virtual reality has been called “the final compute platform” for its ability to bring people directly into digital worlds. We’re at the beginning of an important shift as our digital and physical realities merge in ways that are almost unimaginable. Today magic lives in our phones and in the cloud, but it will soon exist in our physical surroundings. With unprecedented input technologies and artificial intelligences on the horizon, our world is about to get a lot more interactive – and feel a lot more human.